AIDLC: Why Your Software Development Life Cycle Is Broken: 5 Impactful Truths About the New AI-Native Era

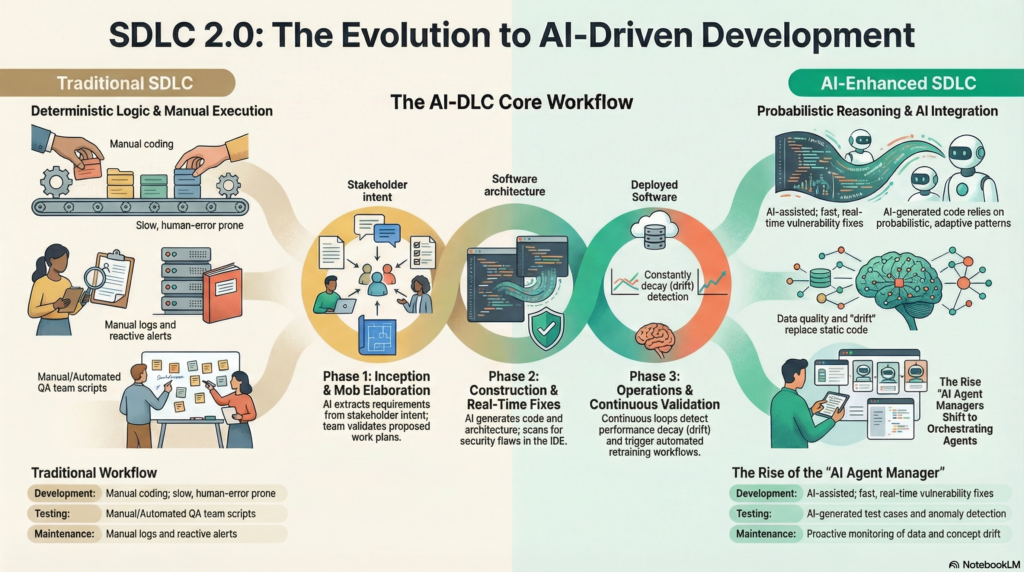

For decades, the Software Development Life Cycle (SDLC) has operated with a familiar, predictable rhythm. Product managers dictate requirements, engineers write the code, and QA teams test the result. This was a deterministic system designed for a world of static logic where identical inputs yielded identical outputs.

That era is over. We are currently trapped in a “Deterministic Delusion.” The “works on my machine” defense has lost its validity because the “machine” no longer follows a procedural script; it reasons probabilistically. We are witnessing a fundamental shift from the traditional SDLC to the AI-Driven Development Life Cycle (AIDLC)—an AWS-pioneered methodology that integrates AI as a central collaborator. For systems involving autonomous, reasoning entities, we must go further into the Agent Development Lifecycle (ADLC), where behavior and reasoning are treated as measurable, governable, and constantly evolving assets.

To succeed in this transition, leaders must dismantle legacy engineering silos and adopt these five impactful truths.

1. AI is Not a Tool, It’s Your Newest Junior Developer

Treating AI as a simple “coding assistant” is a strategic inefficiency that reinforces outdated development models. To truly harness this shift, organizations must view AI as a central teammate. In the AIDLC, the AI systematically creates work plans, seeks clarification on business intent, and requires the same level of guidance you would afford a human hire.

This shift requires a commitment to “patience and proper mentorship.” Just as you wouldn’t expect a new junior developer to push mission-critical code without oversight on day one, AI systems need an onboarding period to align with organizational standards and context.

“Think of AI tools as junior developers joining your team. Initially, they need guidance and oversight. Over time, they become more autonomous and valuable. Just as you wouldn’t expect immediate perfection from new hires, AI requires patience and proper mentorship.” — Snyk

2. The Death of the “Pass/Fail” Testing Model

In the traditional SDLC, testing is binary. A feature either meets the static logic requirements or it fails. Because AI-native systems are probabilistic, they do not reason procedurally; two identical prompts can yield different reasoning paths. Consequently, testing is no longer a “phase” that ends before deployment; it is a perpetual discipline of Continuous Certification.

| Aspect | Traditional SDLC (Fixed Logic) | AIDLC / ADLC (Adaptive Systems) |
| --- | --- | --- |
| Logic Type | Deterministic (Predictable/Static) | Probabilistic (Reasoning/Adaptive) |
| Validation | Static Regression / Pass-Fail | Evaluation Frameworks |
| Security Focus | Security by Code (Vulnerability mitigation) | Security by Behavior (Prompt injection/misuse) |
| Primary Metrics | Functional Correctness | Hallucination, Groundedness, Bias |
| Testing Cycle | Completed before release | Continuous Behavioral Certification |

Success in an AI-native workflow is defined by behavioral outcomes. You must engineer evaluation frameworks that track the distribution of performance across metrics like safety and groundedness. Crucially, the focus shifts from finding bugs in code to mitigating vulnerabilities in behavior, such as goal hijacking or memory poisoning.
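To make "tracking the distribution of performance" concrete, here is a minimal sketch in Python. It sends the same prompt many times, scores each answer, and gates on the pass rate instead of a single pass/fail result. Everything named here (`evaluate_distribution`, `certify`, `toy_groundedness`, the thresholds, and the toy agent) is a hypothetical stand-in for illustration and does not come from any AIDLC or ADLC specification. A real groundedness scorer would be far more involved than a substring check.

```python
import statistics
from typing import Callable, Dict


def evaluate_distribution(
    agent: Callable[[str], str],
    prompt: str,
    scorer: Callable[[str], float],
    runs: int = 20,
    threshold: float = 0.8,
) -> Dict[str, float]:
    """Send the same prompt `runs` times and summarize how the scores spread."""
    scores = [scorer(agent(prompt)) for _ in range(runs)]
    return {
        "mean": statistics.mean(scores),
        "stdev": statistics.pstdev(scores),
        "worst": min(scores),
        # Share of runs that met the bar; this replaces a single pass/fail bit.
        "pass_rate": sum(score >= threshold for score in scores) / runs,
    }


def certify(report: Dict[str, float], min_pass_rate: float = 0.9) -> bool:
    """Gate on the pass rate across runs rather than on one lucky run."""
    return report["pass_rate"] >= min_pass_rate


def toy_groundedness(answer: str) -> float:
    """Toy scorer (assumption): 1.0 if the answer repeats the documented policy."""
    return 1.0 if "30 days" in answer else 0.0


if __name__ == "__main__":
    import random

    rng = random.Random(7)
    candidate_answers = [
        "Refunds are accepted within 30 days.",
        "Refunds are accepted within 30 days with a receipt.",
        "Refunds are accepted within 90 days.",  # ungrounded answer
    ]

    def toy_agent(prompt: str) -> str:
        # Simulates a probabilistic system: same prompt, varying answers.
        return rng.choice(candidate_answers)

    report = evaluate_distribution(
        toy_agent, "What is the refund window?", toy_groundedness
    )
    print(report, "certified:", certify(report))
```

Running the same check on a schedule after deployment, rather than once before release, is one way to approximate the continuous certification described in this section.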

3. Engineers are Shifting from “Ticket Pullers” to “Agent Orchestrators”

The traditional loop of pulling a ticket, writing lines of code, and debugging is giving way to the “Rise of AI Agent Managers.” While AIDLC handles the general workflow acceleration, the ADLC specifically addresses the discipline of managing reasoning agents. The engineer’s role is evolving into that of a system architect and technical conductor.

Professional impact is no longer defined by the volume of code produced, but by judgment, technical expertise, and the ability to interpret nuance that machines cannot replicate. Engineers must now orchestrate fleets of agents, reviewing machine-generated output for scalability and ensuring that the agent’s reasoning aligns with the broader system architecture.

“We’ll operate as conductors orchestrating AI capabilities, applying contextual knowledge and ethical judgment that machines cannot replicate.” — Forbes

4. Beware the “Silent Failure” of Model Drift

Unlike traditional software, where bugs manifest as visible exceptions, AI systems suffer from “silent failures.” A model can appear operationally healthy, with acceptable latency and uptime, while its prediction quality quietly deteriorates. Catching that deterioration is a business imperative: it requires observability pipelines that capture accuracy and drift telemetry alongside the usual operational metrics.

- Model Drift: Changes in prediction behavior where the model’s output distribution shifts over time.

- Concept Drift: A shift in the relationship between input features and the target variable (e.g., changing consumer behaviors or evolving fraud patterns).

Detecting these failures is complicated by “Ground Truth Lag.” For example, in churn prediction, you may not know for months whether a prediction was correct. To maintain system health, you must implement these strategies:

- Prediction Drift Monitoring: Tracking changes in output distributions (mean, variance, and class distribution) to identify anomalies without waiting for labels.

- Upstream Data Quality Checks: Assessing the completeness and validity of incoming data to catch pipeline failures before they reach the model.

- Performance Metric Tracking & Proxy Metrics: Comparing predictions against real-world outcomes as they become available. In cases of significant lag, you must utilize proxy metrics—short-term correlated signals—to provide an early warning of degradation.
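The first two strategies can be sketched without any labels at all. The Python below compares a baseline window of predictions against a current window and checks incoming records for missing fields. The article does not name a specific drift statistic, so the Population Stability Index (PSI) is swapped in here as one common choice. The function names and the 0.2 alert threshold are assumptions; 0.2 is a widely quoted rule of thumb, not a standard.

```python
import math
import statistics
from collections import Counter
from typing import Dict, Hashable, List, Mapping, Sequence


def class_distribution(labels: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Share of predictions per class."""
    counts = Counter(labels)
    return {label: n / len(labels) for label, n in counts.items()}


def population_stability_index(
    baseline: Sequence[Hashable], current: Sequence[Hashable], eps: float = 1e-6
) -> float:
    """PSI between two sets of predicted classes; 0.0 means identical shares."""
    base = class_distribution(baseline)
    cur = class_distribution(current)
    psi = 0.0
    for label in set(base) | set(cur):
        # Clamp to eps so a class missing from one window does not hit log(0).
        b = max(base.get(label, 0.0), eps)
        c = max(cur.get(label, 0.0), eps)
        psi += (c - b) * math.log(c / b)
    return psi


def score_summary(scores: Sequence[float]) -> Dict[str, float]:
    """Mean and variance of predicted scores, for window-to-window comparison."""
    return {
        "mean": statistics.mean(scores),
        "variance": statistics.pvariance(scores),
    }


def missing_rate(records: List[Mapping], required_fields: Sequence[str]) -> float:
    """Fraction of records with at least one required field absent or None."""
    incomplete = sum(
        any(record.get(name) is None for name in required_fields)
        for record in records
    )
    return incomplete / len(records)


if __name__ == "__main__":
    last_month = ["stay"] * 90 + ["churn"] * 10
    this_week = ["stay"] * 50 + ["churn"] * 50
    psi = population_stability_index(last_month, this_week)
    PSI_ALERT = 0.2  # assumed rule-of-thumb threshold
    print(f"PSI={psi:.3f}", "ALERT" if psi > PSI_ALERT else "ok")

    batch = [{"age": 30, "plan": "pro"}, {"age": None, "plan": "free"}]
    print("missing rate:", missing_rate(batch, ["age", "plan"]))
```

Because none of these checks need ground truth, they can run on every batch and act as the early warning while labeled outcomes are still months away.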

5. Moving from “Sprints” to “Bolts”

The high-velocity nature of AI-native development renders the traditional two-week sprint obsolete. The AIDLC replaces Sprints with “Bolts”—shorter, intense work cycles measured in hours or days—and replaces Epics with “Units of Work.”

This cadence is sustained through a new architectural requirement: Persistent Context. To ensure work continues seamlessly across Bolts, the AI must maintain a persistent state by storing work plans, requirements, and design artifacts directly in the project repository. This acceleration is supported by two collaborative rituals:

- Mob Elaboration: The entire team validates AI proposals in real-time, transforming business intent into requirements to prevent building based on an “abstract AI interpretation” of the goal.

- Mob Construction: The team provides real-time clarification on technical decisions as the AI proposes logical models and code solutions.
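The Persistent Context requirement described above can be illustrated with a small Python sketch that writes a work plan into the repository and reloads it at the start of the next Bolt. The `WorkPlan` fields, the `aidlc-context` directory, and the `work_plan.json` file name are all hypothetical; the AIDLC material cited here does not prescribe a file layout, so treat this as one possible shape rather than the method itself.

```python
import json
import tempfile
from dataclasses import asdict, dataclass, field
from pathlib import Path
from typing import List

# Hypothetical location inside the project repository.
CONTEXT_DIR = "aidlc-context"
PLAN_FILE = "work_plan.json"


@dataclass
class WorkPlan:
    unit_of_work: str
    requirements: List[str] = field(default_factory=list)
    completed_steps: List[str] = field(default_factory=list)
    pending_steps: List[str] = field(default_factory=list)


def save_plan(plan: WorkPlan, repo_root: str) -> Path:
    """Write the plan as JSON so it can be committed with the code."""
    path = Path(repo_root) / CONTEXT_DIR / PLAN_FILE
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(asdict(plan), indent=2), encoding="utf-8")
    return path


def load_plan(repo_root: str) -> WorkPlan:
    """Restore the plan at the start of the next Bolt."""
    path = Path(repo_root) / CONTEXT_DIR / PLAN_FILE
    return WorkPlan(**json.loads(path.read_text(encoding="utf-8")))


def complete_step(plan: WorkPlan, step: str) -> None:
    """Move a step from pending to completed."""
    plan.pending_steps.remove(step)
    plan.completed_steps.append(step)


if __name__ == "__main__":
    # A temporary directory stands in for a real repository checkout.
    with tempfile.TemporaryDirectory() as repo:
        plan = WorkPlan(
            unit_of_work="checkout-flow",
            requirements=["Support saved payment methods"],
            pending_steps=["domain model", "API design", "implementation"],
        )
        complete_step(plan, "domain model")
        save_plan(plan, repo)

        resumed = load_plan(repo)
        print("Next step:", resumed.pending_steps[0])
```

Keeping the plan in version control alongside the code means the team can review and correct it in the same way they review any other change.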

Conclusion: The Symbiotic Future

We are moving from a world of applications that execute commands to a world of agents that reason through problems. This transition does not replace the human element; it elevates it to a position of high-level governance. The organizations that thrive will be those that move beyond “AI-assisted” tasks and embrace a fully integrated AIDLC.

These organizations will build more than just faster software; they will build governed, evolving intelligence. In a world where everyone is now effectively a manager of agents, the ultimate question for the modern engineer is: what unique human value do you bring to your next “Bolt”?

https://aws.amazon.com/fr/blogs/devops/ai-driven-development-life-cycle